The boss of the company behind ChatGPT has said it might consider leaving the EU if it cannot comply with a planned law on artificial intelligence (AI).

The EU’s planned legislation could be the first to specifically regulate AI.

And it could require generative AI companies to reveal which copyrighted material had been used to train their systems to create text and images.

“The current draft of the EU AI Act would be over-regulating,” OpenAI’s Sam Altman said, Reuters reported.

“But we have heard it’s going to get pulled back.”

Many in the creative industries accuse AI companies of using the work of artists, musicians and actors to train systems to imitate their work.

But Mr Altman is worried it would be technically impossible for OpenAI to comply with some of the AI Act’s safety and transparency requirements, according to Time magazine.

At an event at University College London, Mr Altman added he was optimistic AI could create more jobs and reduce inequality.

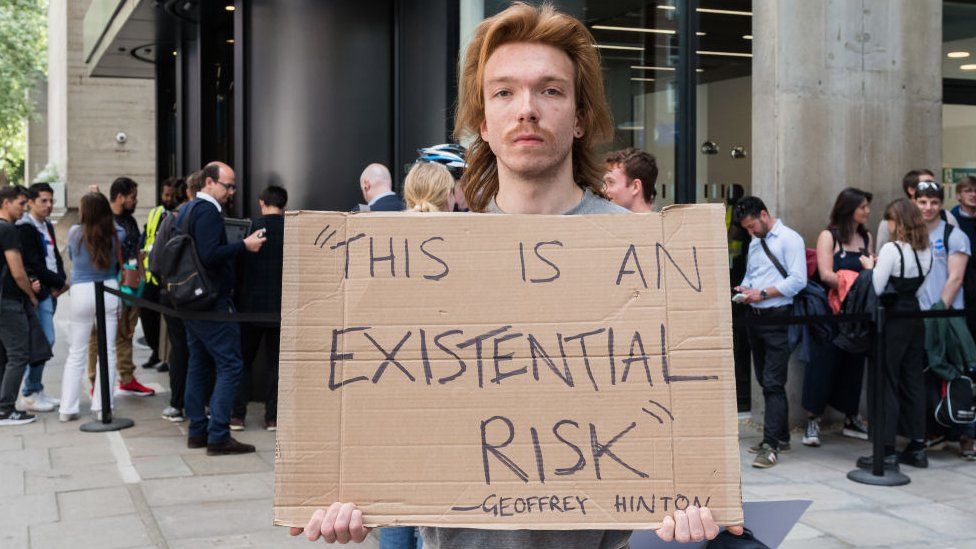

He also met Prime Minister Rishi Sunak and the heads of AI companies DeepMind and Anthropic to discuss the technology’s risks – from disinformation to national security and even “existential threats” – and the voluntary actions and regulation required to manage them.

Some experts fear super-intelligent AI systems could threaten humanity’s existence.

But Mr Sunak said AI could “positively transform humanity” and “deliver better outcomes for the British public, with emerging opportunities in a range of areas to improve public services”.

At the G7 summit in Hiroshima, the leaders of the US, UK, Germany, France, Italy, Japan and Canada agreed creating “trustworthy” AI must be “an international endeavour”.

And before any EU legislation comes into effect, the European Commission aims to develop an AI pact with Google’s parent company, Alphabet.

International cooperation is vital to regulating AI, according to EU industry chief Thierry Breton, who met with Google chief executive Sundar Pichai in Brussels.

“Sundar and I agreed that we cannot afford to wait until AI regulation actually becomes applicable – and to work together with all AI developers to already develop an AI pact on a voluntary basis ahead of the legal deadline,” Mr Breton said.

Silicon Valley veteran, author and O’Reilly Media founder Tim O’Reilly said the best start would be mandating transparency and building regulatory institutions to enforce accountability.

“AI fearmongering, when combined with its regulatory complexity, could lead to analysis paralysis,” he said.

“Companies creating advanced AI must work together to formulate a comprehensive set of metrics that can be reported regularly and consistently to regulators and the public, as well as a process for updating those metrics as new best practices emerge.”

Source: BBC